Processing oil and gas production data from downhole gauges

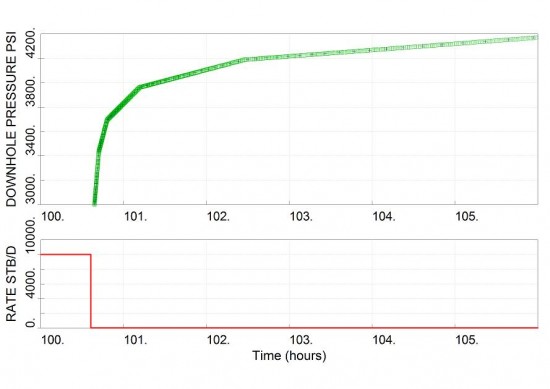

Although a permanent downhole gauge records every data point, the data processing software often stores the measured pressure at a much lower frequency, sometimes as sparse as one point every 15 minutes or more. A request for higher-frequency data then simply returns a linear interpolation between these few stored points. This compression reduces the dataset using a pressure or time window. For example, if the raw pressure has not changed by more than a set amount since the last stored point, the new point is not recorded; if no point has been stored for a set length of time, a new point is recorded regardless.
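The pressure-or-time window described above can be sketched as follows. This is a minimal illustration, not any vendor's actual algorithm: the function names `compress` and `interpolate` and the parameters `deadband` and `max_gap` are assumptions made for this example.

```python
from bisect import bisect_right


def compress(times, pressures, deadband, max_gap):
    """Keep a raw sample only if the pressure moved more than `deadband`
    since the last stored sample, or if at least `max_gap` seconds elapsed.
    (Hypothetical parameters; real systems may use different rules.)"""
    stored = [(times[0], pressures[0])]
    for t, p in zip(times[1:], pressures[1:]):
        last_t, last_p = stored[-1]
        if abs(p - last_p) > deadband or (t - last_t) >= max_gap:
            stored.append((t, p))
    return stored


def interpolate(stored, t):
    """Linear interpolation between stored points: what a request for
    higher-frequency data would return from the compressed store."""
    ts = [s[0] for s in stored]
    i = bisect_right(ts, t)
    if i == 0:
        return stored[0][1]
    if i == len(stored):
        return stored[-1][1]
    (t0, p0), (t1, p1) = stored[i - 1], stored[i]
    return p0 + (p1 - p0) * (t - t0) / (t1 - t0)
```

With six raw samples and a deadband of 0.5, only the first sample and the first one that moved by more than 0.5 survive. Every value requested between them lies on a straight line, whatever the gauge actually measured.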

The pressure build-up (PBU) derivative will likely be affected by this issue, and there is a risk that the data from costly downhole pressure gauges will be unusable.

Difficult to detect this problem

It may be difficult to detect this problem, especially with low data frequency and high permeability reservoirs. In addition, some commercial well test software packages apply smoothing to the dataset by default. As a result, this problem and others (tidal effects, noise, vibration, changes in fluid density, etc.) may be neither visible nor easily detected by the engineer. Such data could then lead to wrong decisions.

We note that this is a frequent problem in oil and gas companies. High-frequency data recorded during shut-in periods need to be retrieved and investigated. The derivative plot and a thorough expert analysis will help.
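One simple screening check, offered here as a sketch and not as an established diagnostic, is to measure how often consecutive samples lie on the same straight line. The function name `collinear_fraction` and the tolerance `tol` are assumptions made for this example.

```python
def collinear_fraction(times, pressures, tol=1e-9):
    """Fraction of interior samples whose slope to the next sample equals
    the slope from the previous one (within `tol`)."""
    slopes = [
        (pressures[i + 1] - pressures[i]) / (times[i + 1] - times[i])
        for i in range(len(times) - 1)
    ]
    if len(slopes) < 2:
        return 0.0
    same = sum(1 for a, b in zip(slopes, slopes[1:]) if abs(a - b) <= tol)
    return same / (len(slopes) - 1)
```

A fraction close to 1 over a shut-in period suggests that the delivered values were interpolated instead of measured. A pressure that is genuinely flat at gauge resolution would score high as well, so the result should be read alongside the derivative plot and not on its own.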

This problem should be eliminated, and the raw data should be accessible to the engineer.

The main purposes of running a downhole pressure gauge are well test analysis (permeability, well damage, reservoir pressure over time, etc.) and long-term surveillance, including the acquisition of pressure data from opportunistic (unplanned) shut-ins. During shut-in periods, raw downhole gauge data should be available with a pressure point every 15 seconds or less. For high permeability gas reservoirs, raw data should be captured every 5 seconds or less.
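These sampling targets are easy to verify on the timestamps of a retrieved shut-in dataset. The sketch below assumes timestamps in seconds; the function names and the `high_perm_gas` flag are hypothetical, and only the 15-second and 5-second limits come from the text above.

```python
def largest_gap(times):
    """Largest interval, in seconds, between consecutive samples."""
    return max(b - a for a, b in zip(times, times[1:]))


def meets_sampling_target(times, high_perm_gas=False):
    """True if no gap exceeds 15 s (or 5 s for high permeability gas)."""
    limit = 5.0 if high_perm_gas else 15.0
    return largest_gap(times) <= limit
```

Note that this check only confirms the spacing of the delivered points. Interpolated data can pass it, so it does not prove that the points are raw measurements.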

This data processing issue can be minimized or entirely removed. The sampling frequency must be much higher from just before a production shut-in until the end of the shut-in period. The goal should be to acquire suitable raw pressure data every time there is an unplanned shut-in, i.e. to capture good quality data during opportunistic shut-ins, learn continuously from the well and reservoir, and assess the field development and recovery over time.

Construction and instrumentation teams could help to have the data compression removed completely from the downhole gauge data, or to put in place an algorithm that changes the data frequency based on the wing valve position. Make sure that this problem is recognized and solved before performing a PBU or pressure fall-off (PFO) test.
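A valve-triggered scheme of this kind could look like the sketch below. It is an illustration under stated assumptions, not a description of any real gauge or historian system: the class `ValveTriggeredRecorder`, its parameters (`slow_every`, `pre_trigger`, `open_threshold`) and the idea of reading the wing valve position as an open fraction between 0 and 1 are all hypothetical. A small buffer of recent raw samples is kept so that the points just before the shut-in can be recovered.

```python
from collections import deque


class ValveTriggeredRecorder:
    """Hypothetical recorder: sparse storage while flowing,
    every raw sample while the wing valve is closing or closed."""

    def __init__(self, slow_every=60, pre_trigger=30, open_threshold=0.95):
        self.slow_every = slow_every              # while flowing, keep 1 raw sample in N
        self.open_threshold = open_threshold      # valve counts as closing below this
        self.recent = deque(maxlen=pre_trigger)   # last raw samples before a shut-in
        self.stored = {}                          # time -> pressure
        self._flowing_count = 0

    def push(self, t, pressure, valve_open_fraction):
        if valve_open_fraction < self.open_threshold:
            # Valve closing or closed: recover the buffered pre-shut-in
            # samples, then store every raw sample.
            self.stored.update(self.recent)
            self.recent.clear()
            self.stored[t] = pressure
        else:
            self.recent.append((t, pressure))
            if self._flowing_count % self.slow_every == 0:
                self.stored[t] = pressure
            self._flowing_count += 1

    def samples(self):
        """Stored samples as (time, pressure) pairs in time order."""
        return sorted(self.stored.items())
```

With one raw sample per second, `slow_every=60` and `pre_trigger=10`, the recorder keeps one point per minute while the well flows. When the valve starts to close, it stores the last 10 seconds before that moment and then every sample for the rest of the shut-in.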

For more information or for a discussion on this topic, please don’t hesitate to contact us.